Facebook, LinkedIn and other social media outlets have been an interesting intersection of people in my life…those that have only known me as hearing, only known me as deaf and those that have known me throughout this whole hearing loss journey. Recently, I’ve felt this tug to discuss the emotional and social barriers that come with having hearing loss.

Being late-deafened, I have the perspective of having had normal hearing, being hard of hearing and now deaf. Just like the Kübler-Ross model for the stages of grieving the death of a loved one, there are Stages of Grieving Hearing Loss. This process not only affects the individual but also the family. Just as someone grieves the loss of a loved one, people who experience some sort of illness grieve the loss of their healthy self. Even now, eighteen years later, I sit here and think, “Wow. I’ve been deaf for eighteen years now.”

The purpose of this blog post is to address those unasked and unanswered questions, those awkward-away glances and yes, even those looks of pity or yearning for days when I was still hearing…those things that I know others have always wanted to ask but felt uncomfortable in doing so. I guess this is my way of expressing myself because I’ve had difficulty in acknowledging that elephant in the room…

No need to say, “I’M SORRY!”

I realize that some people say this automatically in response to certain situations. I find that this is similar to the reaction that people might have when they find out someone has died, or gotten cancer, or lost their job, or…_______. Look through social media and if someone posts sad/bad news, apologies often abound. I know that you know that it’s not your “fault”.

In the same vein, to my friends with hearing loss: We don’t need to start our sentences with, “I’m sorry” either. As a female, I am very attuned to the fact that I often start sentences with this phrase (and I’m not the only one according to this article) and I’m trying not to. As a double-whammy, I used to say, “I’m sorry…I’m hard of hearing, can you please repeat what you said?” This is a habit that’s hard for me to break but I keep at it!

Do say, “What can I do so you can hear better?”

Here are some strategies that help me:

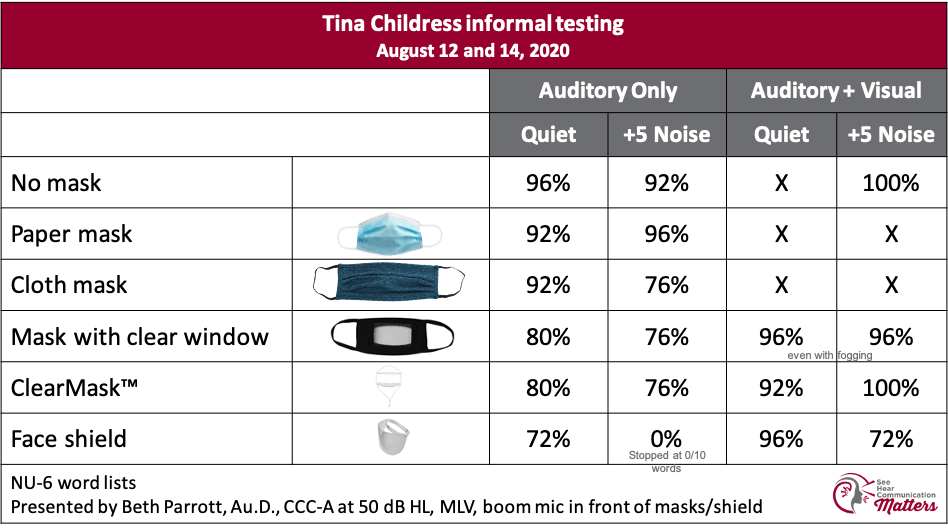

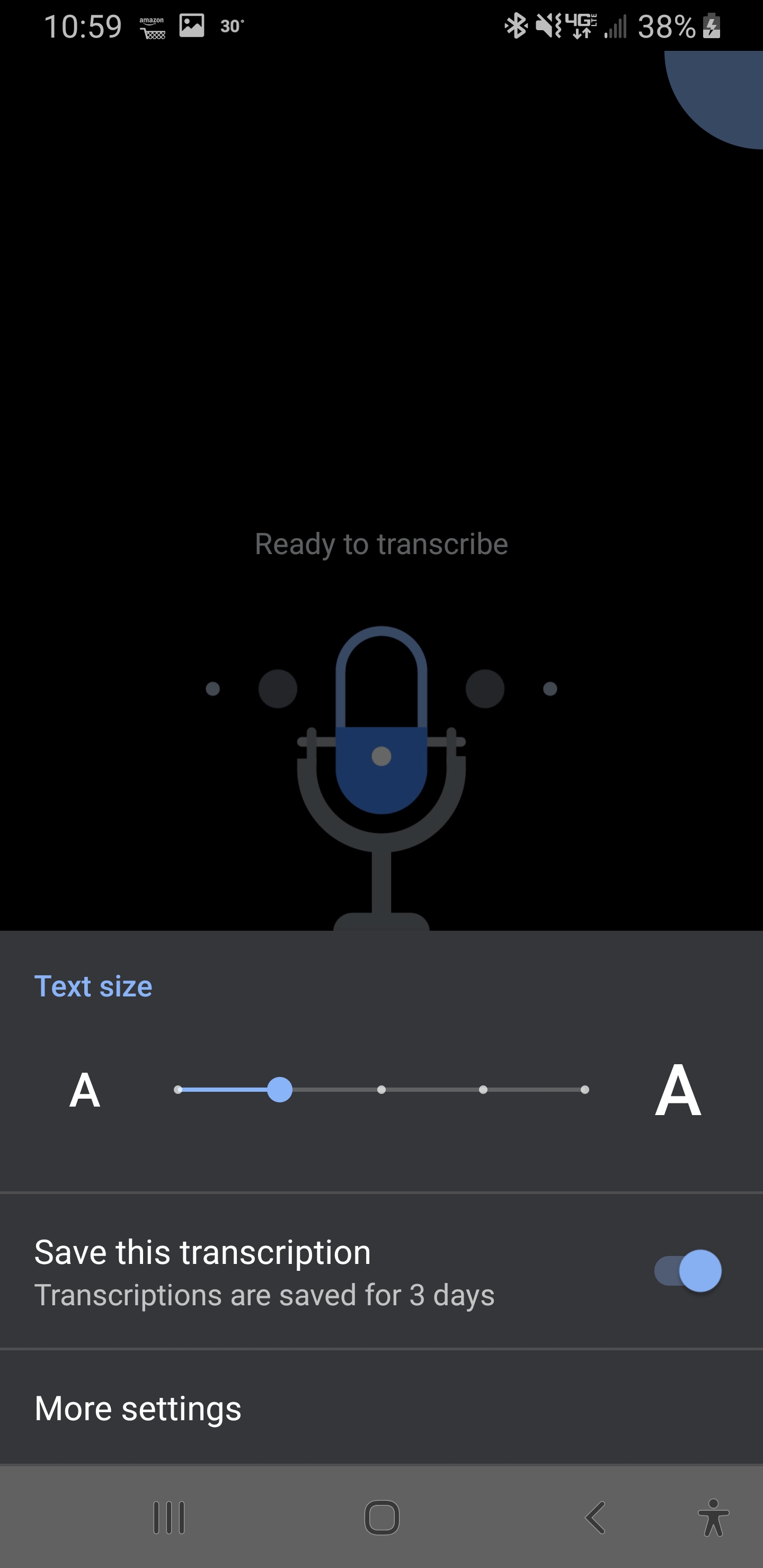

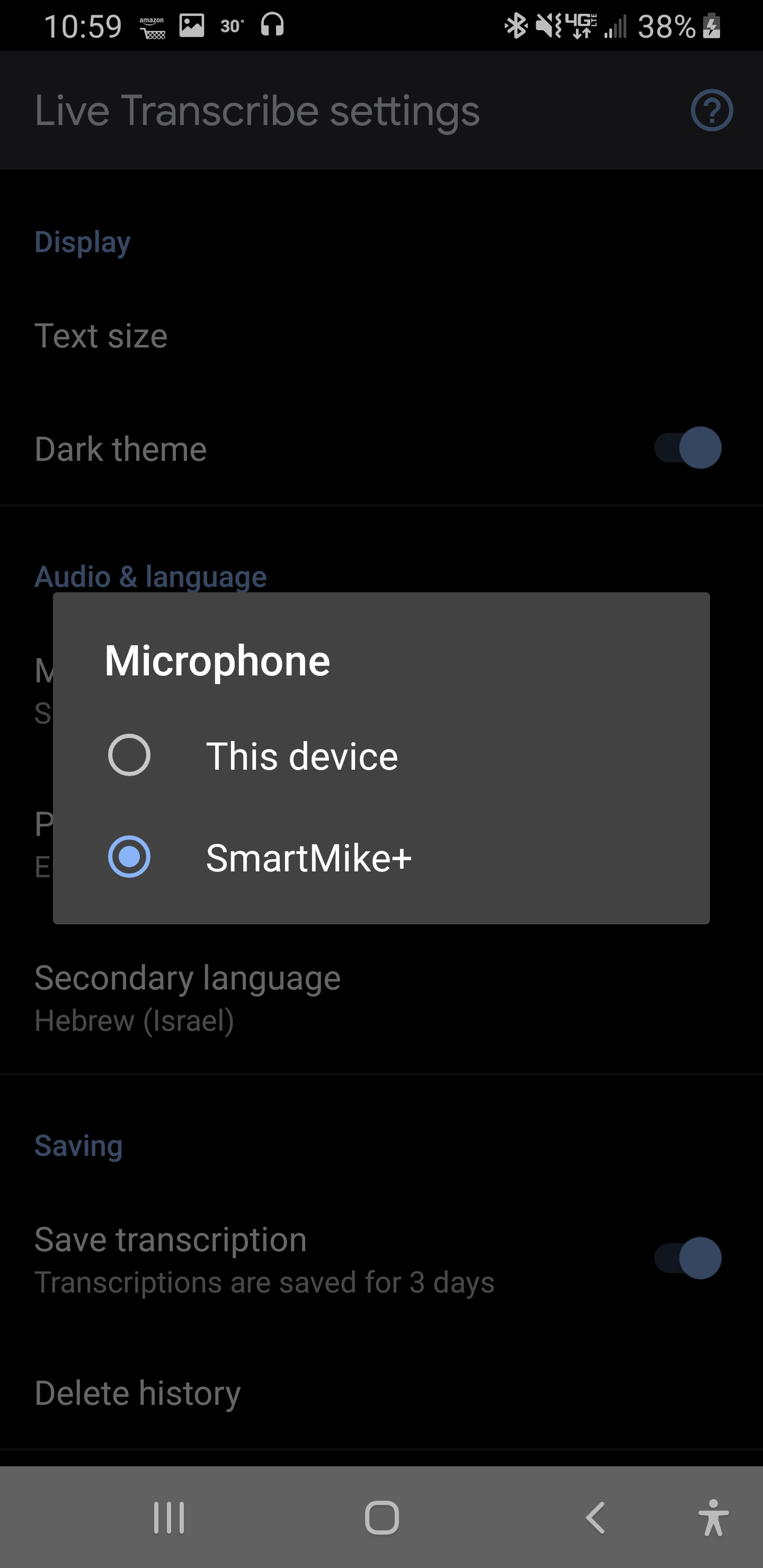

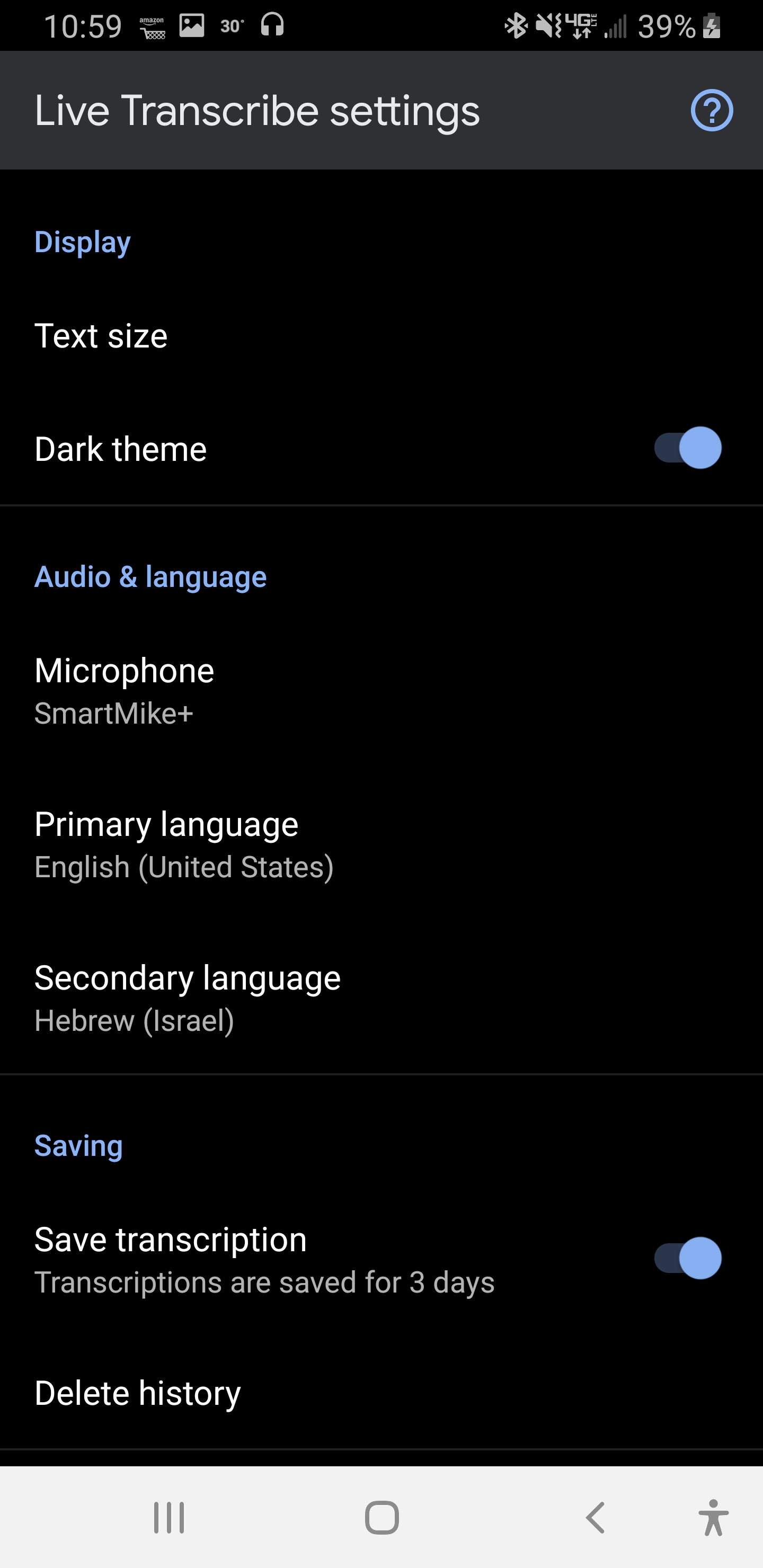

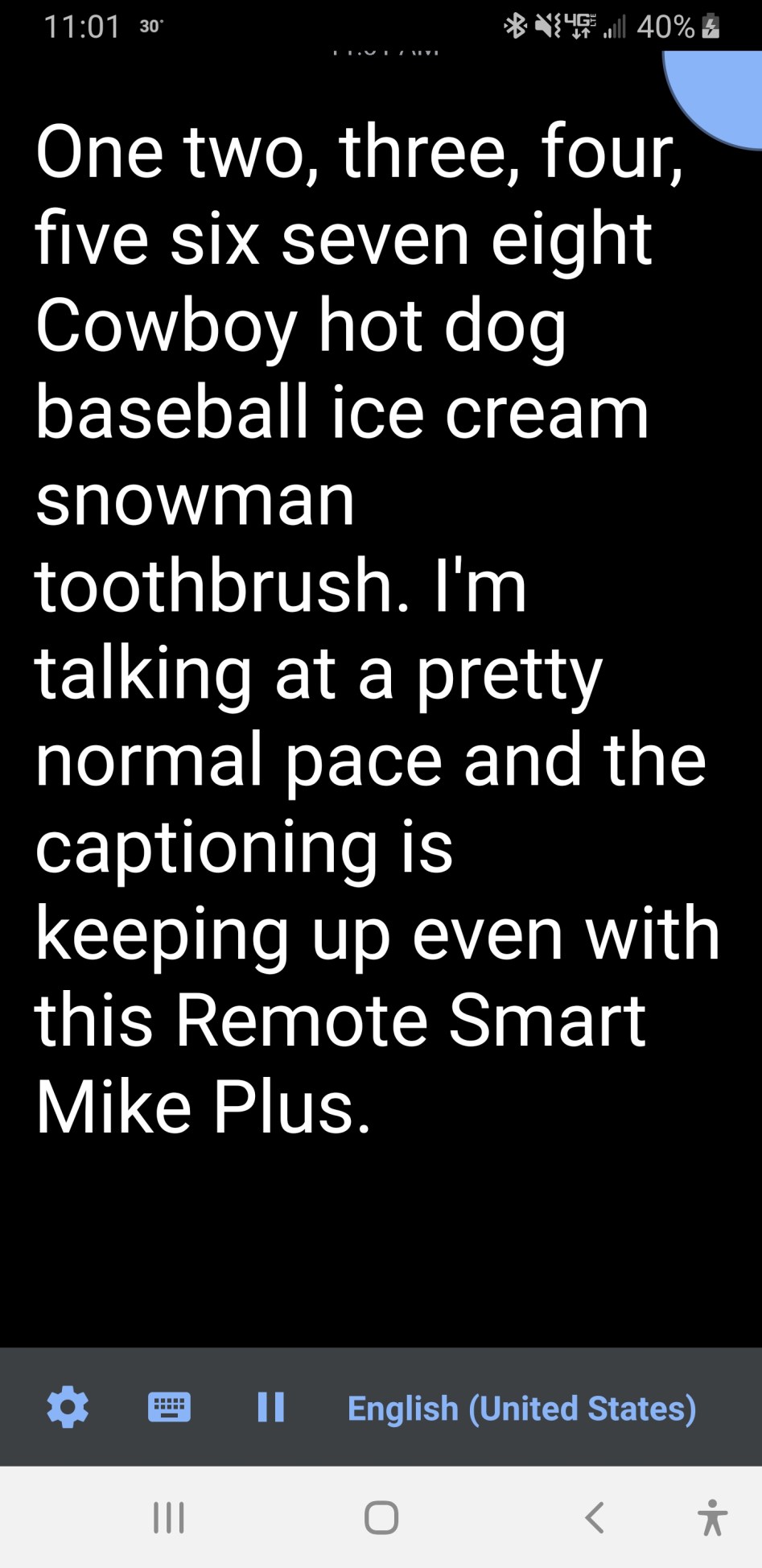

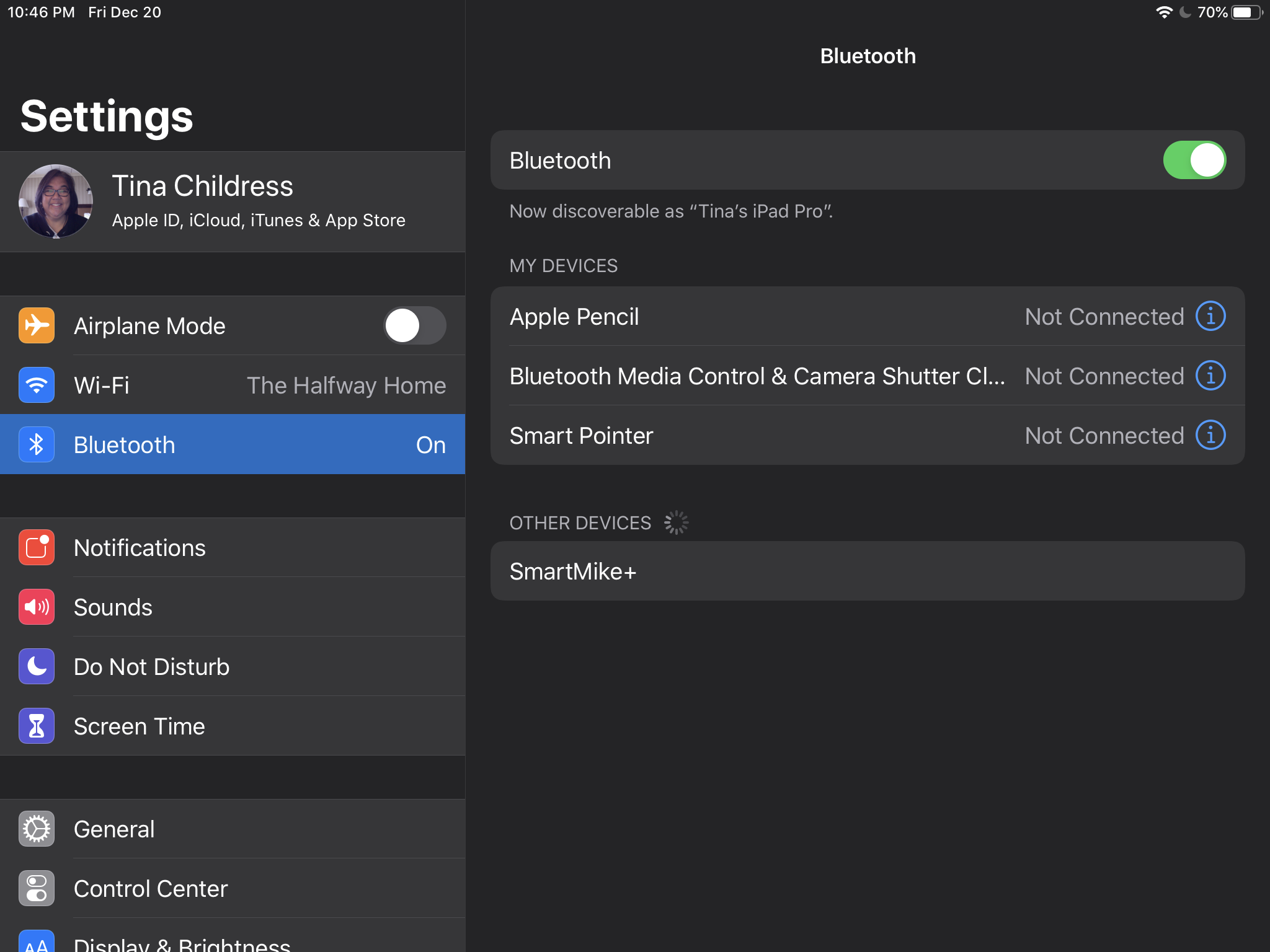

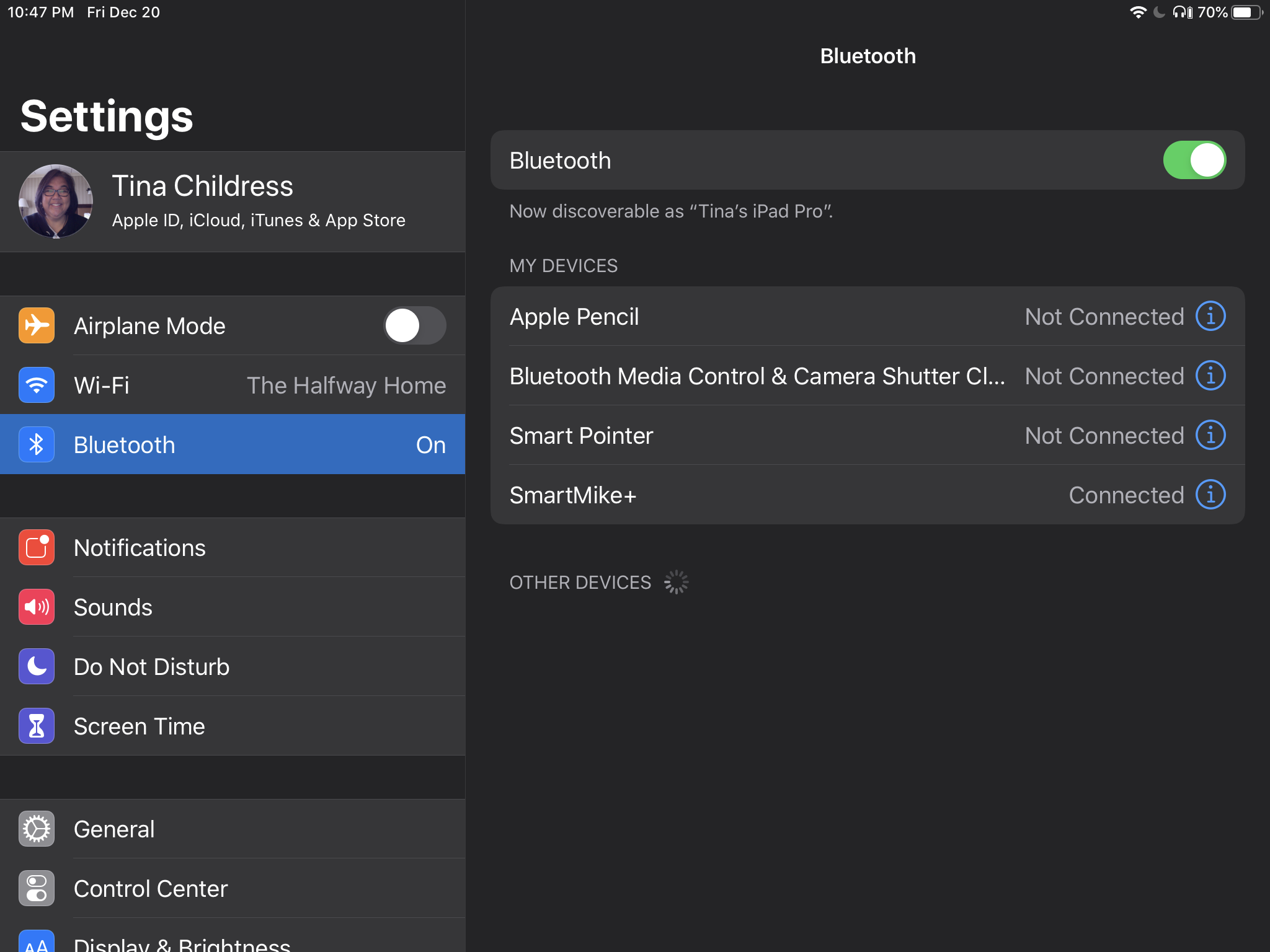

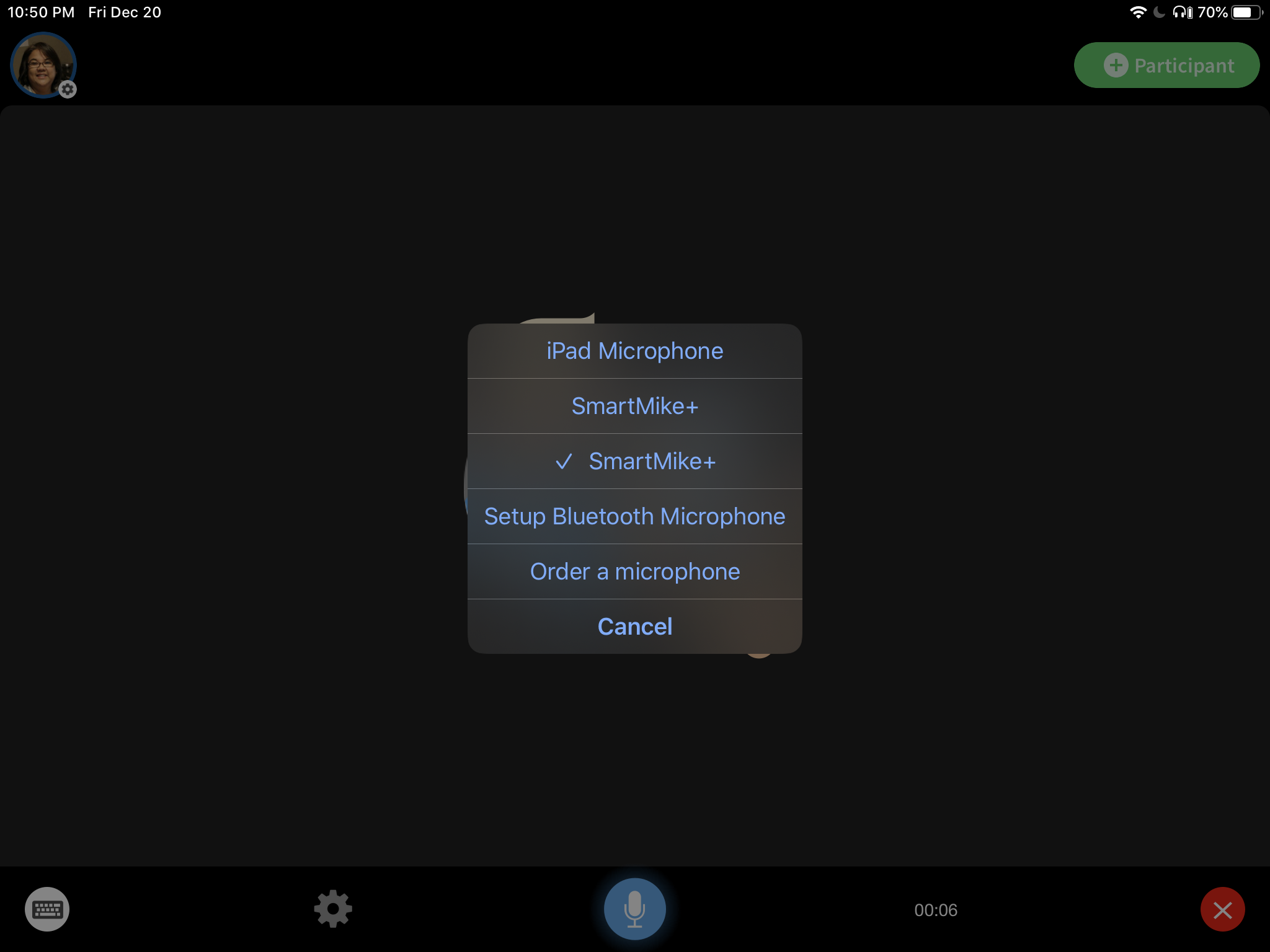

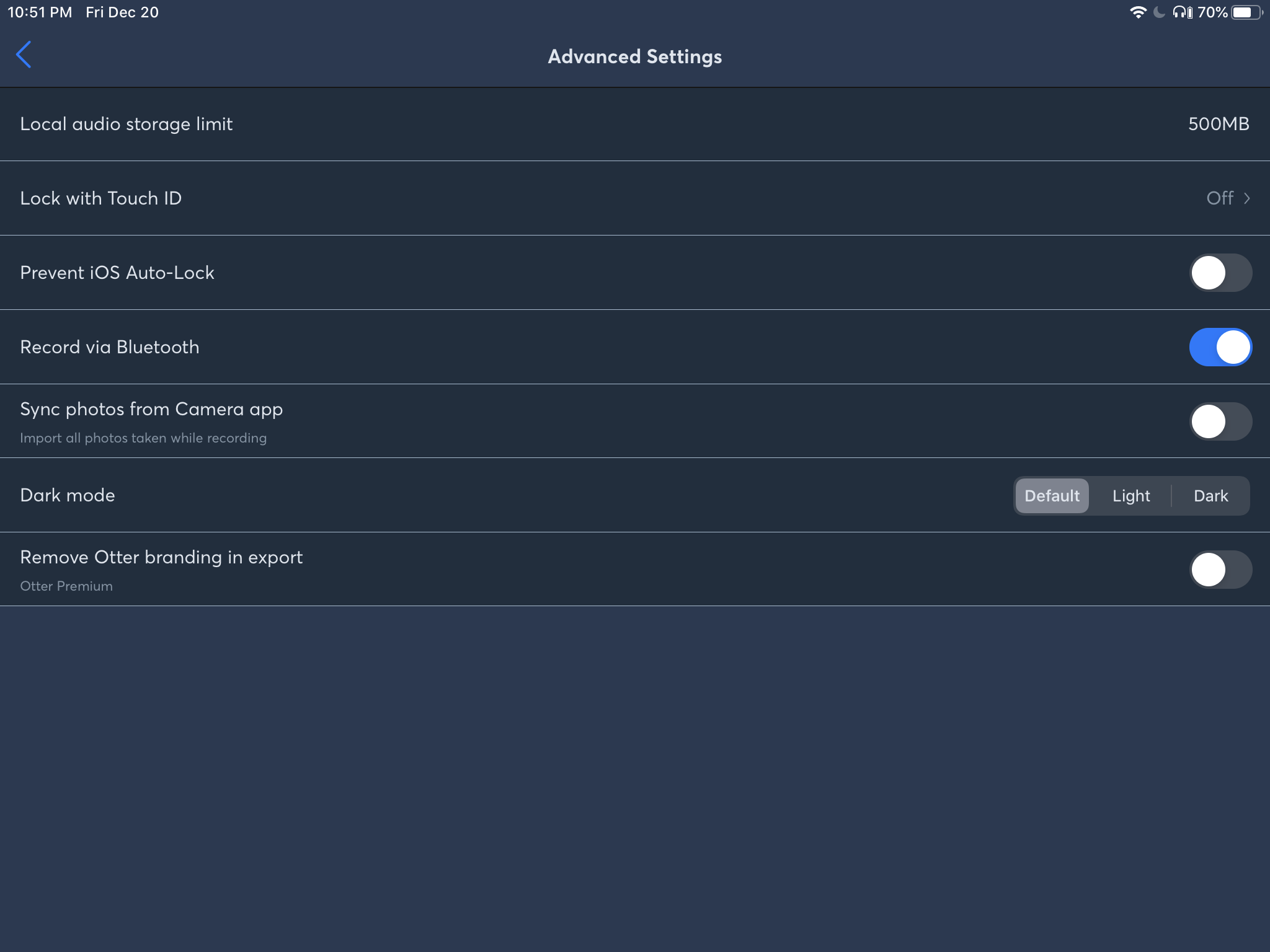

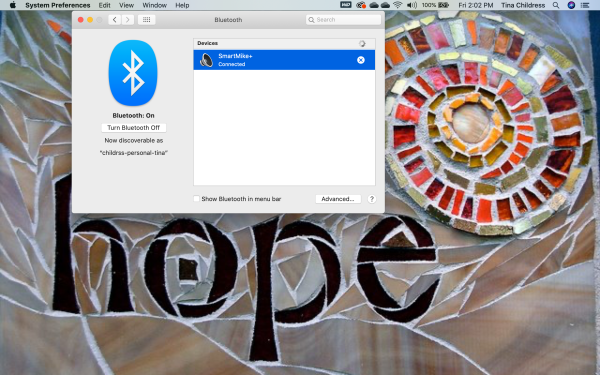

- Going someplace quiet(er) – Noise is the inherent enemy of all people with hearing loss (and even people with normal hearing). Look around a noisy restaurant and you see people more intently staring at each other’s faces, leaning in closer or even trying to block out noise with their menu. For those of us with hearing loss, it takes a lot of work to hear in these situations. Some people are good lipreaders and some are not. Some people try to compensate in noise by changing programs on their amplification or even using hearing assistive technology (which consists of a microphone worn by the talker and a receiver worn by the person with hearing loss). I love my friends who ask the manager to turn down the music! If we’re still struggling, consider moving tables, locations or even going to a quiet hallway when you need to have a conversation.

- Having good lighting – It’s hard lipreading in the dark!

- Repeating what you said – Sometimes I just wasn’t ready to hear what you said or I heard the end but not the beginning. Also, there are some words that I might not hear so well in a noisy place so using different words can help (e.g., the office –> the room in the school where you work). Please be patient.

- Let me know if the topic changes – If I miss something (because I didn’t hear it or because I looked away, etc.) which causes me to miss part of the conversation, I may never get back to the topic. LOL I really appreciate my friends who often start their narratives with “changing topic now…”.

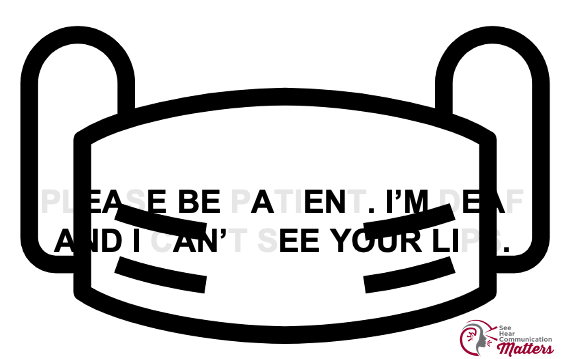

- Face-to-face communication – Please don’t try whispering (or shouting) in my ear. One of my favorite sayings is, “Eye contact before ear contact.” I need to be able to see your face/eyes for speechreading and this doesn’t happen if you’re blindsiding me. If you’re holding something in front of your face (like a menu) or you’re not facing me, then I get lost, too. Have you ever heard someone say, “I can’t hear you! I don’t have my glasses on!” It seems somewhat counterintuitive but actually, this statement goes to point out how we need visual cues (including using our glasses) to understand someone better.

- Indicating who is talking – I love it when I’m in a group and someone nods, waves or raises their hand when they want to say something. That makes it easier for me to know who is talking rather than looking for whose lips are moving.

- If you talk fast, please slow down – You don’t have to talk super slow but just not really, really fast.

- Knowing sign language – I learned sign language as an audiology student not knowing that I would need it to survive later when I became a late-deafened adult. If you know it, let’s use it! If you want to learn it, let me know and I’ll point you in the right direction. This is my preferred method of communicating in loud environments.

What does it sound like for me in noise?

I really like the sound simulations (and accompanying visual representation) on this site. If you listen to “Restaurant” –> “Mild” or “Moderate”, then you can get a sense of what it’s like. It’s hard work hearing and listening!

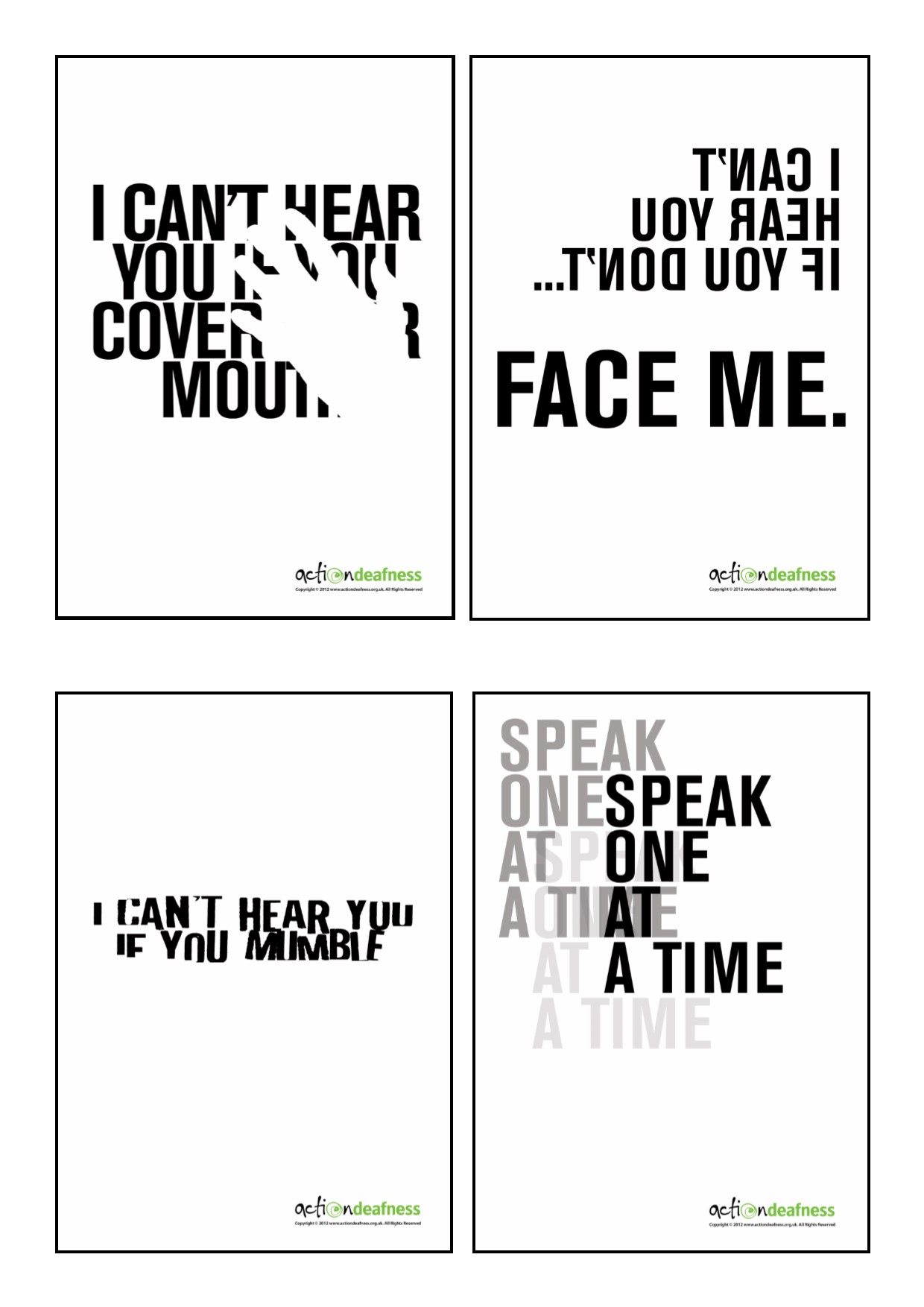

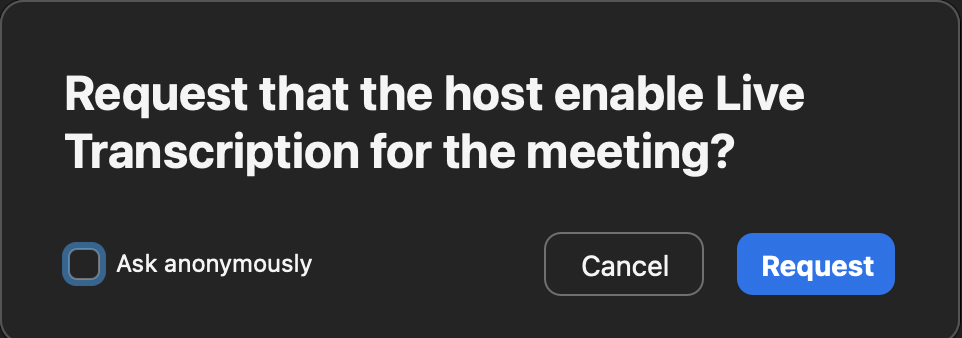

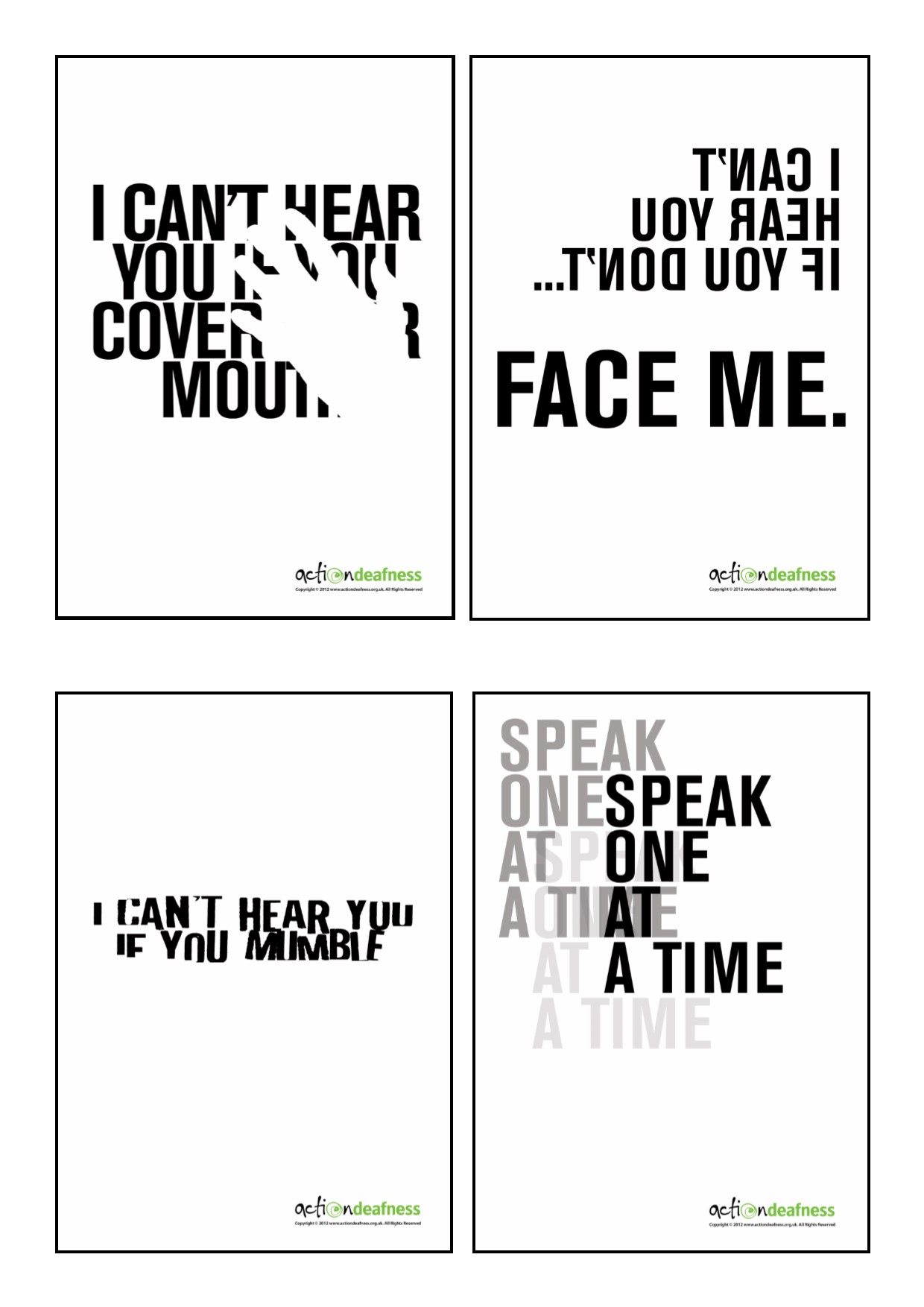

More visual representations!

I really, really love these Deaf Awareness posters from Action Deafness. So much so that I purchased a few sets of them (since they had to be shipped from the UK anyway) and had them framed in the hard-to-find A3 frame size. So simple, yet so profound in the way they represent what living with hearing loss is like!

Sometimes I act like I heard you when I didn’t, really.

My good friend, Karen Putz, talks about social bluffing especially as it pertains to children (she has three deaf/hard of hearing kids). It can definitely apply to adults, too…

I might pretend like I heard you because I don’t want you to know that I’m deaf (though THAT cover is blown now that you’re reading this blog post). When I meet someone for the first time or I think that I’m only going to have casual conversations with them, I don’t introduce myself as, “Hi! I’m Tina Childress…and-I’m-deaf-and-I-have-cochlear-implants-that-help-me-hear-so-please-face-me-when-you-talk.” TMI. The facts that my speech is largely unaffected and you can’t see my cochlear implants (because I’ve had essentially the same haircut since I was 12) help me “pass” as a normal hearing person. Most people never even know that I’m deaf. Someone once compared it to having “hearing privilege”. So true.

I think this strategy has a few purposes:

1) I don’t want you to judge me and my intellect based on my deafness but rather my accomplishments. Unfortunately, there are too many negative stereotypes of people who are deaf as being “less than”.

2) I don’t want to have to go into my personal story.

3) I don’t want to feel pity (people get a “look” that I immediately recognize as this) nor do I want to be seen as inspiration porn.

4) Asking for you and others to repeat gets tiresome (for both you and me)

I would rather be known as “Maddy and Mia’s mommy” or “Matt’s wife” or “_______’s friend” or “that educational audiologist” as opposed to being “Deaf Tina”, but I know that this is unrealistic because that is who I am, too. It doesn’t define me other than my communication needs, but it has shaped me in terms of my experiences.

So, yeah, sometimes I’ll nod my head in agreement, smile or laugh (just a half second later than when everyone else is smiling or laughing) so I don’t get called out for my bluffing because unless I am purposefully included, it is what it is. I don’t expect special treatment all the time, especially if I don’t disclose, but I do find myself gravitating towards friends who do this automatically and don’t have to be reminded.

Oh, and sometimes, I act like I heard you when I actually misheard what you said because, well, #Ihavehearingloss. 🙂

“Never mind” and “I’ll tell you later” are phrases that hurt.

“Never mind” implies that even though something was said, you are making the judgment for me that you don’t think it is something I would want to know.

“Later” is too late usually. The moment is gone.

How well do I hear with my cochlear implants anyway?

So much better than I did with my hearing aids but I’m not cured – they are tools to help me hear. Noise is hard. Music is hard. I can do it but I just have to work at it.

What I hear sounds “normal”; it’s just that sometimes it seems muffled or soft or distorted. My mom sounds like my mom. If I’ve known you a long time, I hear in my head what I remember you sounding like. It didn’t start out that way automatically…it was also like learning a new language, like I learned ASL. I had to work hard to train my brain to make sense out of the very new sounds.

Can I talk on the phone?

Yassssss! For short to medium length conversations, if it’s quiet on my end and quiet on your end and we have a good, strong signal, I do pretty well. Throw in some adverse listening conditions, poor phone connection or multiple people talking at a time and it becomes exponentially harder. It’s not my favorite way of communicating because I’m always afraid I’m going to miss or misunderstand something (also known as “phone anxiety”, a common feeling amongst people with hearing loss) so I’m glad that so much of the world has transitioned to text based communication (e.g., text, email).

Why do I use sign language so much when I have excellent speech and can hear well?

…because I can! I feel very fortunate that I learned ASL, kept up with my skills, embraced the Deaf community, can sign with my hubby and kids and became fluent. I know learning a new language does not happen easily for others.

Using ASL really depends on the situation and who I’m with. When I’m with my hearing family members (e.g., my parents) who don’t sign, obviously I won’t sign with them. When I’m with my hubby and kids, we sign because we all can sign and we all recognize the benefit of signing in noisy places, at a distance, when we don’t want someone else to understand what we’re saying…

I also use sign language interpreters in situations where there are groups of people that I want/need to hear and events like conferences. Listening in noise and listening to speech amplified through speakers is difficult. If I sit close, lipread, maybe even use assistive technology, I can probably get 85-90% of what was said.

That’s not good enough for me. I want to get it all and I want to know who is talking and I want the information almost simultaneous with the speech and I want to be able to interact with my communication facilitator (i.e., interpreter).

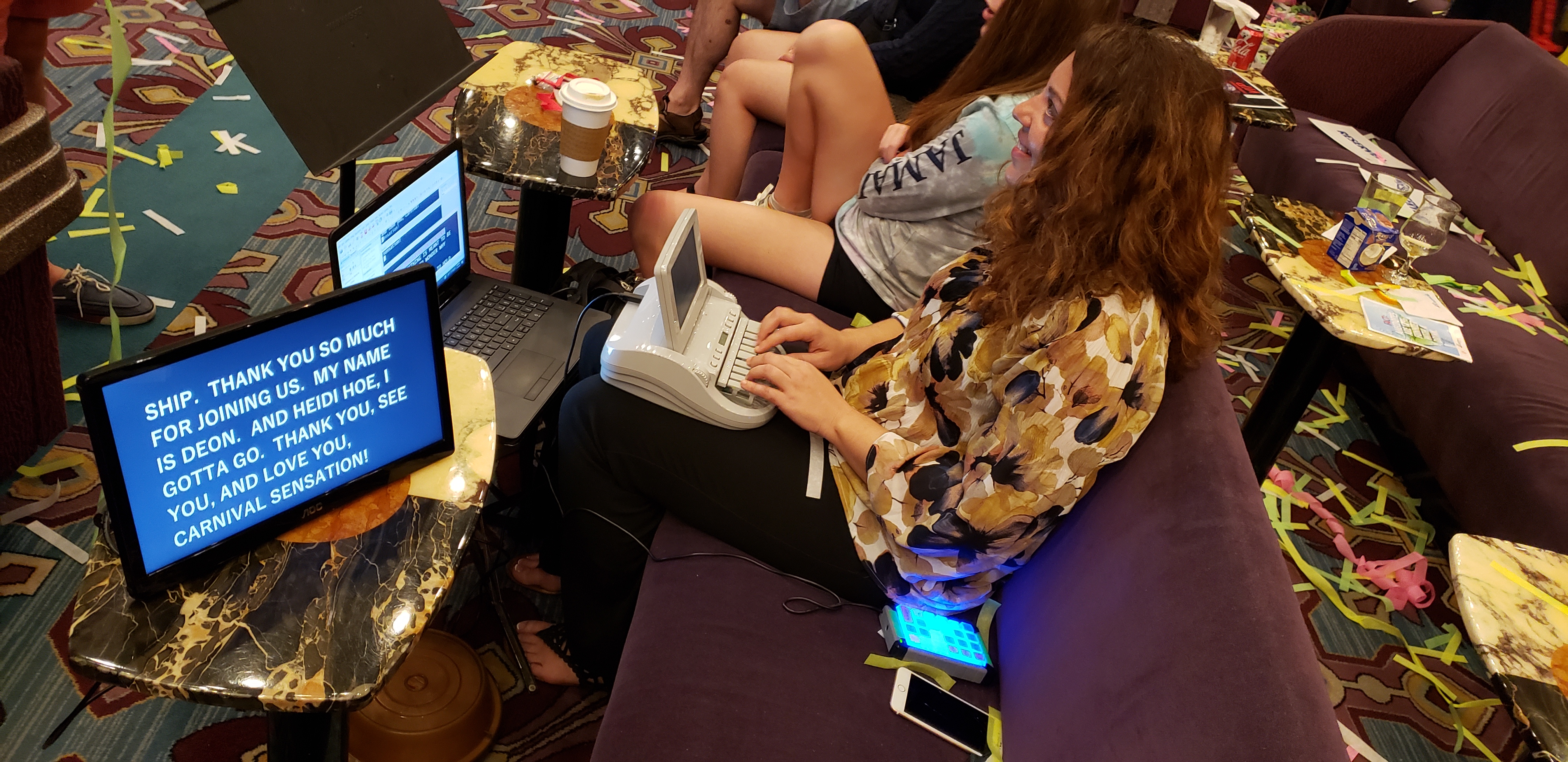

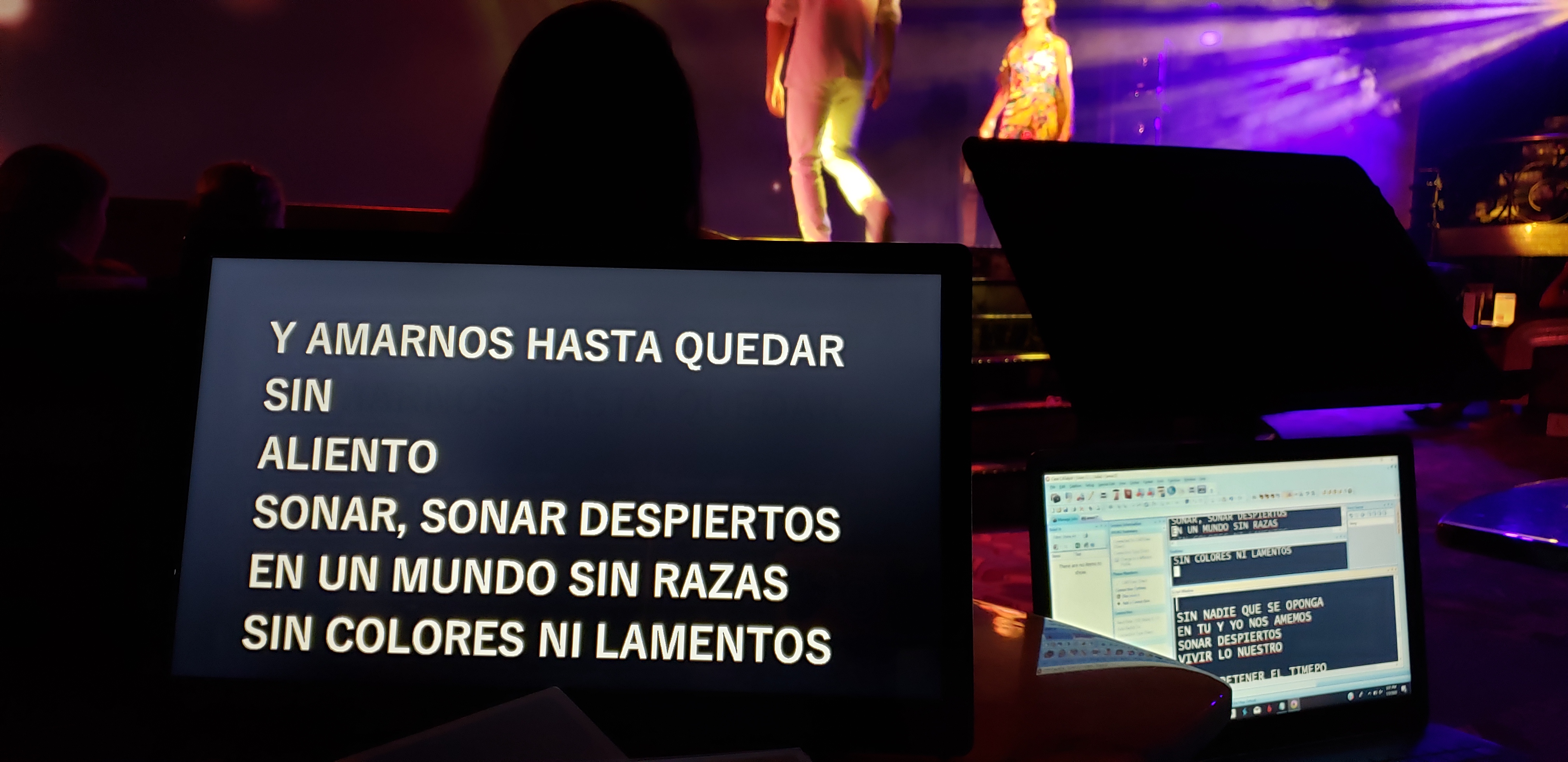

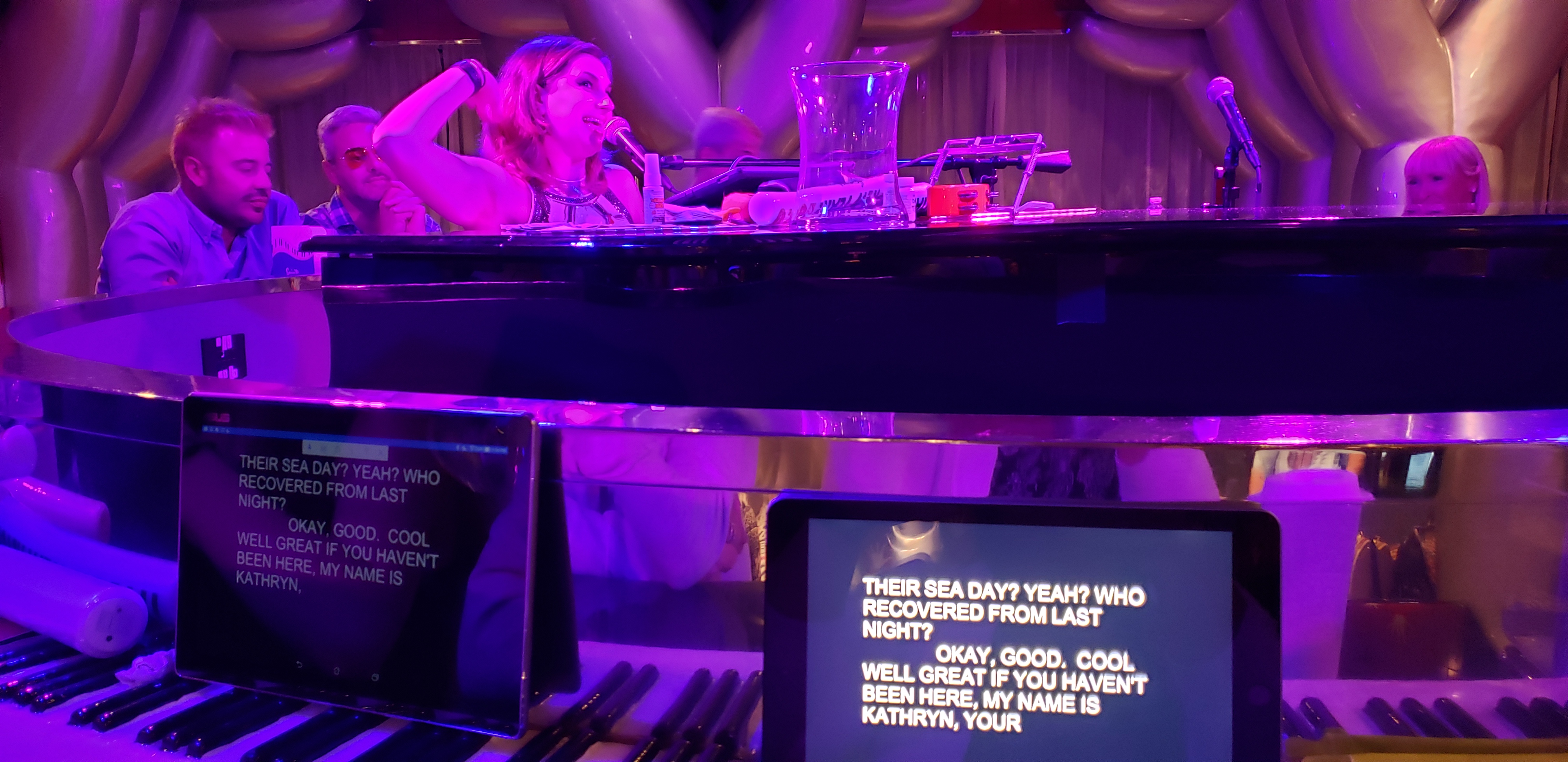

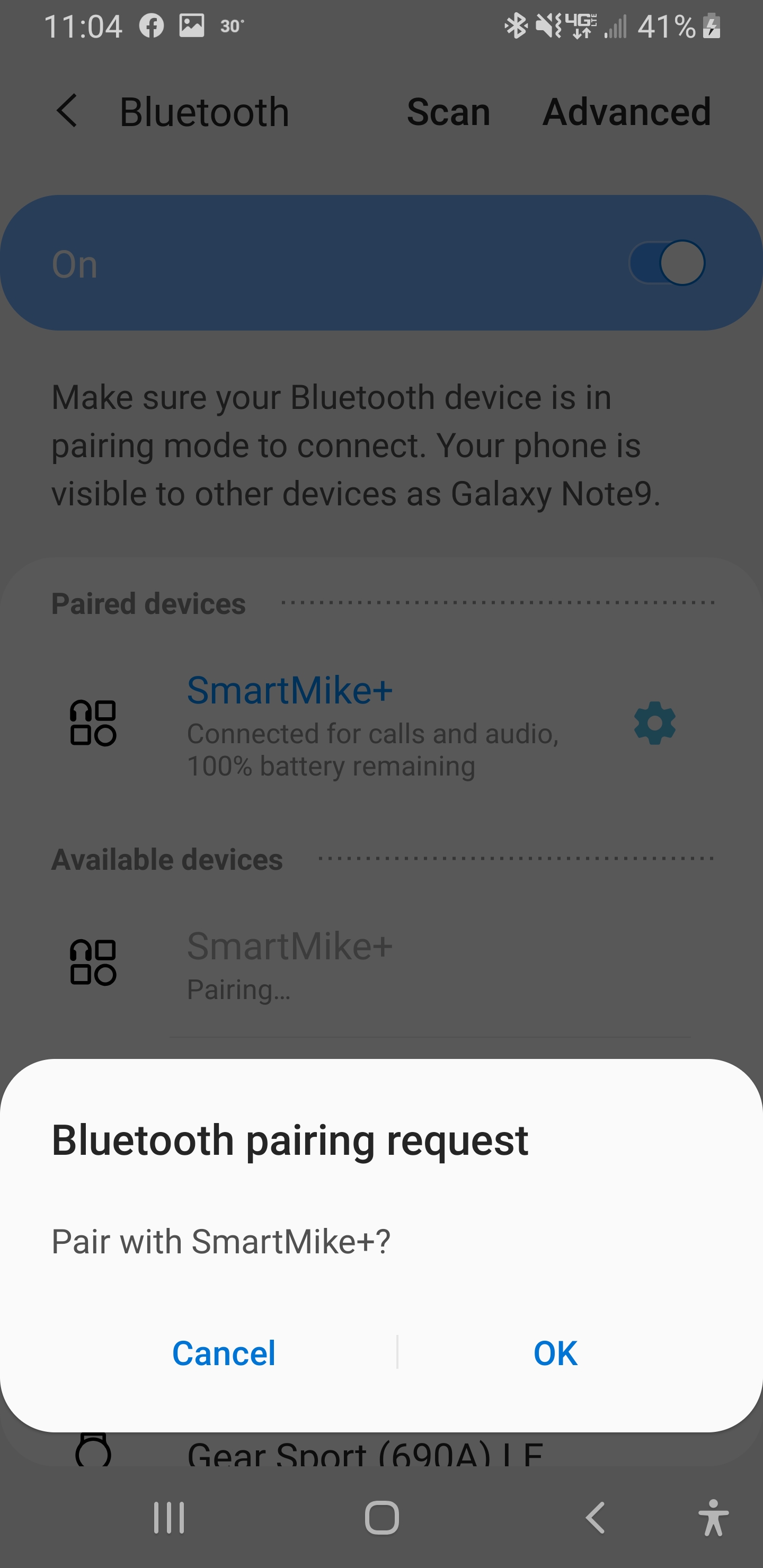

I have also used CART (Communication Access Realtime Translation), also known as captioning, for events and appreciate the access it affords me, too. It’s really a matter of preference, depending on the situation and sometimes on what’s available.

The devices that help me to hear

These things <pointing at my cochlear implant processors> stick to my head with magnets. Yes, I take them off when I sleep or shower or don’t want to hear babies crying on an airplane or my kids arguing. There’s an inside part that was surgically implanted and an external part which is what you see.

Please don’t call them “cochlear transplants”. 😳 Though I have to admit that my daughter used to call them “cochlear eggplants”.🍆

If you have a question, please ask.

I am happy to share my feelings, my experiences and my knowledge. It’s not macabre to ask. I am sure there are always questions. I would rather we talk about it (even if it’s strained) rather than make assumptions about each other. I’m pretty much an open book. LOL

Yes, I sometimes feel sorry for myself and get frustrated…

…usually when it deals with my family. Like if I didn’t understand something they said the first time which escalates into mayhem when it didn’t need to. Or I can’t understand their teacher. Or I know I’m not fully appreciating what they’re doing musically (though this gets better each time I hear them). Sometimes this frustration also extends into social situations and work situations.

…sometimes I wonder how different my life would be if I hadn’t lost my hearing.

This is something that is COMPLETELY normal when looking at the Stages of Grieving Hearing Loss and just because you feel like you got through one stage, it doesn’t mean that it won’t come back and bite you again. (FYI – the stages are denial, anger, bargaining, depression and acceptance.)

Deaf o’clock is my favorite time of the day sometimes!

This is when I take off my cochlear implants and the result is SILENCE. Like pressing the mute button. Silence to think, to ponder, to wonder, to process…and to give my brain a listening break. We ALL need these during the day, especially if it’s stressful.

It’s not all bad. I can also have the best of both worlds!

Being deaf has given me a whole new appreciation, empathy and understanding of what it is like to work with the children and adults that I do in my role as an audiologist.

I LOVE the fact that I can go back and forth between the Deaf world and the Hearing world because I can speak and because I can sign. The addition of Deaf culture adds beliefs and ideals to hold onto, to cherish, to share…and for that, I am grateful.

So, if you see me using a sign language interpreter one minute and then later see me talking on the phone, now you know why.
